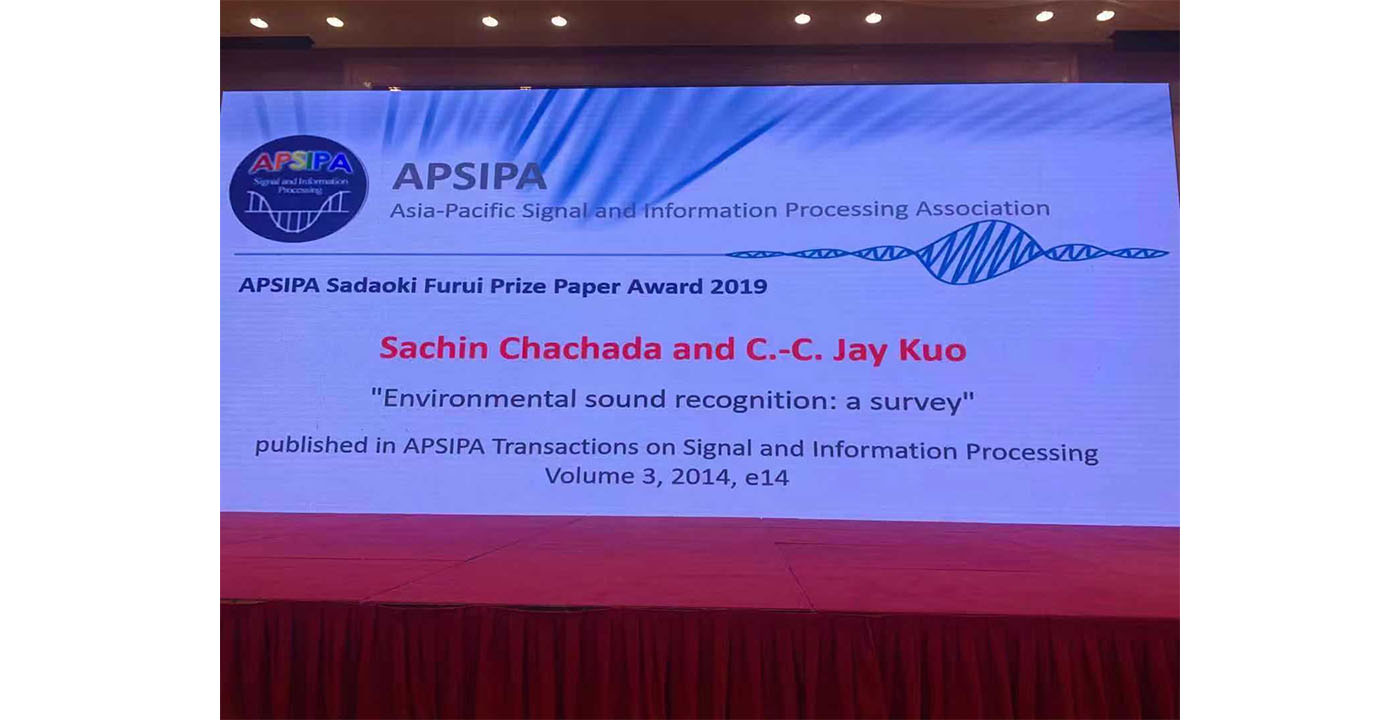

MCL Work Won APSIPA Sadaoki Furui Paper Award

Dr. Sachin Chachada, an MCL alumnus, and Professor C.-C. Jay Kuo received the 2019 Sadaoki Furui Paper Award at the opening ceremony of the 2019 APSIPA ASC, held in Lanzhou, China, on November 19, for the following paper:

Sachin Chachada and C.-C. Jay Kuo, “Environmental sound recognition: a survey,” APSIPA Trans. on Signal and Information Processing, e14, published online 15 December 2014.

According to Google Scholar, the paper has been cited 107 times, and it was downloaded 1,523 times in 2019 (through the end of September). The abstract of the paper is given below.

Although research in audio recognition has traditionally focused on speech and music signals, the problem of environmental sound recognition (ESR) has received more attention in recent years. Research on ESR has significantly increased in the past decade. Recent work has focused on the appraisal of non-stationary aspects of environmental sounds, and several new features predicated on non-stationary characteristics have been proposed. These features strive to maximize their information content pertaining to signal’s temporal and spectral characteristics. Furthermore, sequential learning methods have been used to capture the long-term variation of environmental sounds. In this survey, we will offer a qualitative and elucidatory survey on recent developments. It includes four parts: (i) basic environmental sound-processing schemes, (ii) stationary ESR techniques, (iii) non-stationary ESR techniques, and (iv) performance comparison of selected methods. Finally, concluding remarks and future research and development trends in the ESR field will be given.