MCL Research Presented at WACV 2020

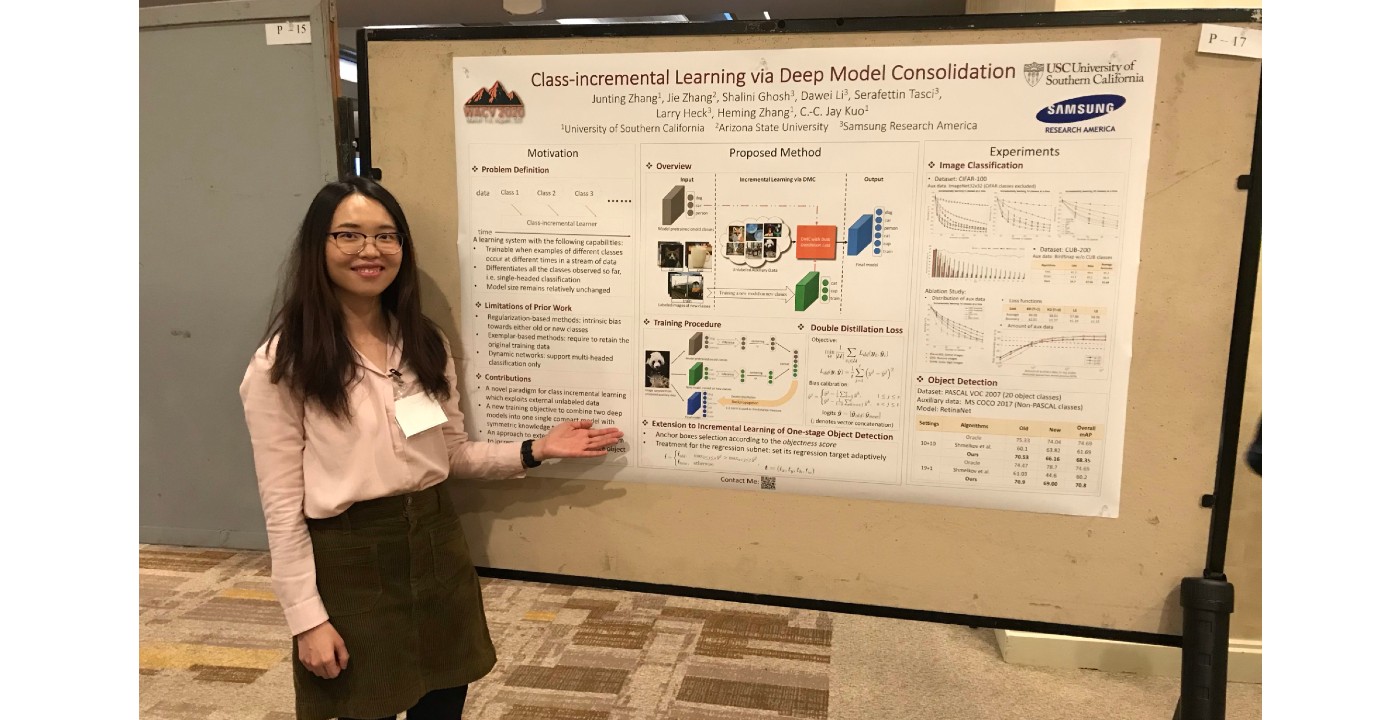

MCL member Junting Zhang presented her paper at the 2020 Winter Conference on Applications of Computer Vision (WACV ’20) in Snowmass Village, Colorado. The paper is titled “Class-incremental Learning via Deep Model Consolidation”, and its co-authors are Jie Zhang, Shalini Ghosh, Dawei Li, Serafettin Tasci, Larry Heck, Heming Zhang, and C.-C. Jay Kuo. Here is a brief summary of Junting’s paper:

“Deep neural networks (DNNs) often suffer from ‘catastrophic forgetting’ during incremental learning (IL): an abrupt degradation of performance on the original set of classes when the training objective is adapted to a newly added set of classes. Existing IL approaches tend to produce a model that is biased towards either the old classes or the new classes, unless they are aided by exemplars of the old data. To address this issue, we propose a class-incremental learning paradigm called Deep Model Consolidation (DMC), which works well even when the original training data is not available. The idea is to first train a separate model only for the new classes, and then combine the two individual models, trained on data from two distinct sets of classes (old classes and new classes), via a novel double distillation training objective. The two existing models are consolidated by exploiting publicly available unlabeled auxiliary data, which overcomes the potential difficulties caused by the unavailability of the original training data. Compared to state-of-the-art techniques, DMC demonstrates significantly better performance in image classification (CIFAR-100 and CUB-200) and object detection (PASCAL VOC 2007) in the single-headed IL setting.”
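To make the consolidation step more concrete, here is a minimal sketch of what a double distillation objective could look like. The summary does not spell out the loss, so the details are assumptions: we assume the consolidated (student) model is trained on unlabeled auxiliary images to regress its logits onto the concatenation of the old-class and new-class teachers' logits, each mean-centred so that neither teacher dominates. All function names are hypothetical, and plain Python lists stand in for tensors; this is an illustration, not the authors' implementation.

```python
from typing import List


def normalize_logits(logits: List[float]) -> List[float]:
    """Subtract the mean so a teacher's logits are zero-centred (assumed normalization)."""
    mean = sum(logits) / len(logits)
    return [z - mean for z in logits]


def double_distillation_target(old_logits: List[float], new_logits: List[float]) -> List[float]:
    """Concatenate the normalized old-class and new-class teacher logits into one target."""
    return normalize_logits(old_logits) + normalize_logits(new_logits)


def double_distillation_loss(
    student_logits: List[float], old_logits: List[float], new_logits: List[float]
) -> float:
    """Mean squared error between the student's logits and the combined teacher target.

    The student is assumed to output one logit per old class followed by
    one logit per new class (a single-headed classifier).
    """
    target = double_distillation_target(old_logits, new_logits)
    if len(student_logits) != len(target):
        raise ValueError("student must output one logit per old + new class")
    return sum((s - t) ** 2 for s, t in zip(student_logits, target)) / len(target)


def batch_loss(
    student_batch: List[List[float]],
    old_batch: List[List[float]],
    new_batch: List[List[float]],
) -> float:
    """Average the per-sample loss over a batch of unlabeled auxiliary images."""
    losses = [
        double_distillation_loss(s, o, n)
        for s, o, n in zip(student_batch, old_batch, new_batch)
    ]
    return sum(losses) / len(losses)


if __name__ == "__main__":
    # Two old classes and three new classes for one auxiliary image.
    old = [1.0, 3.0]
    new = [0.0, 0.0, 3.0]
    print(double_distillation_target(old, new))
    print(double_distillation_loss([0.0] * 5, old, new))
```

Because no labels appear anywhere in the loss, the auxiliary data only needs to be visually similar to the task domain; under this sketch, the two frozen teachers supply all of the supervision, which is how consolidation could proceed without the original training data.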

Junting was also invited to attend the WACV 2020 Doctoral Consortium (WACVDC) to present her research and progress to date. She shared this experience with us:

“It was a great opportunity to interact with experienced researchers in [...]