Congratulations to Kevin Yang on Receiving His Ph.D. Degree

We would like to congratulate Kevin Yang on receiving his Ph.D. degree at the Viterbi Hooding Ceremony held on May 13, 2026, at Bovard Auditorium. Here, he briefly shares his Ph.D. experience at MCL:

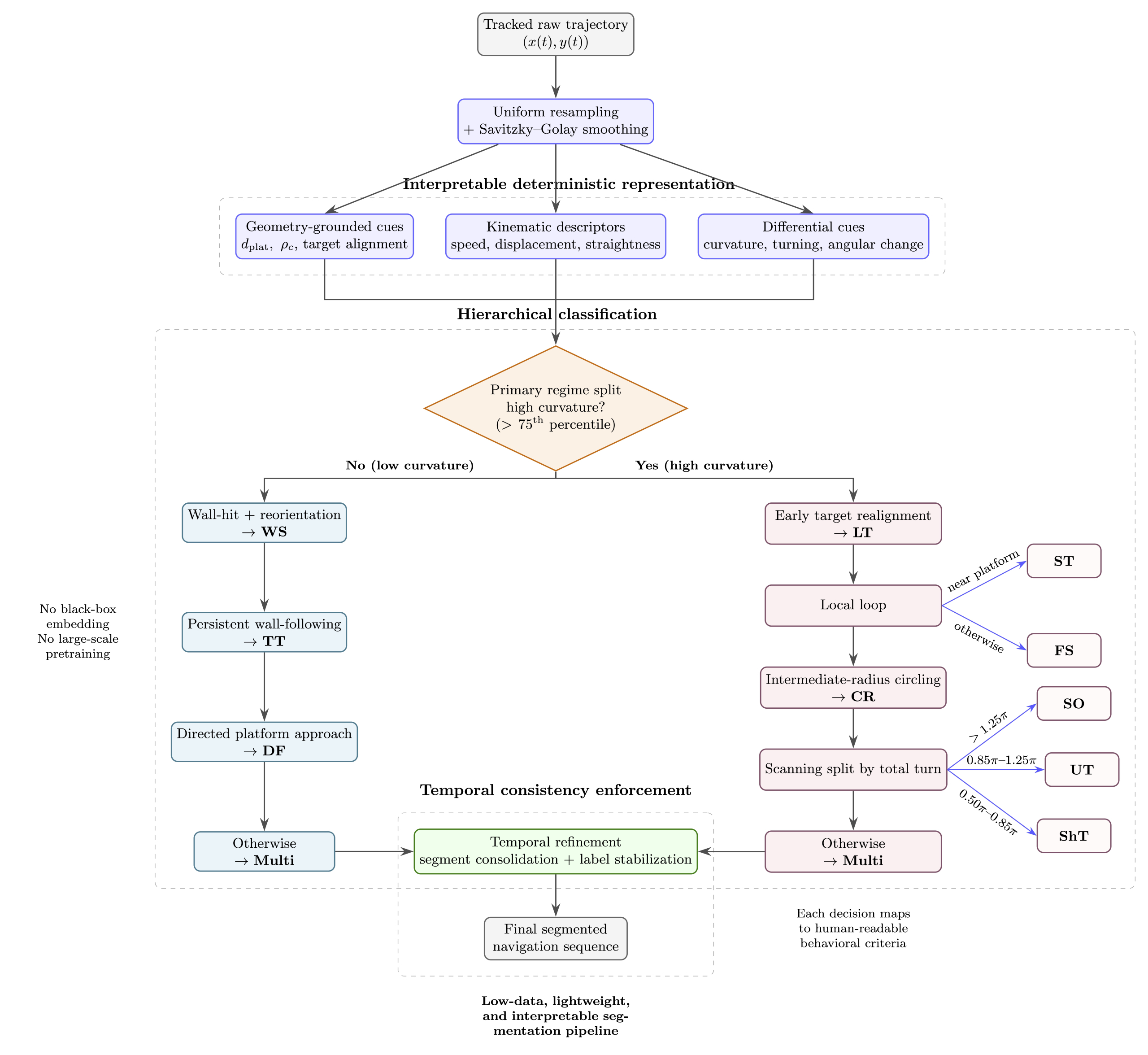

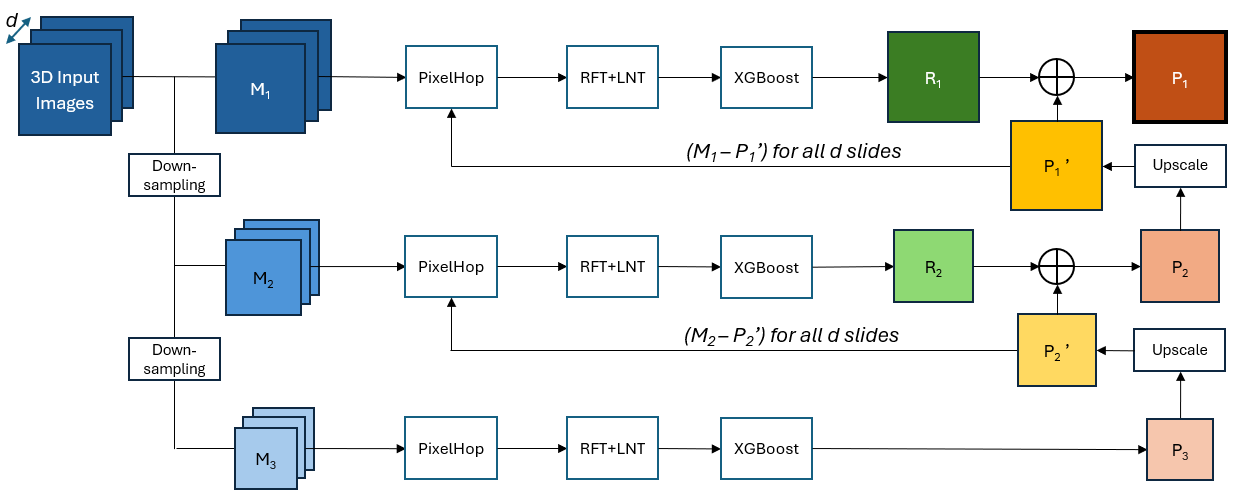

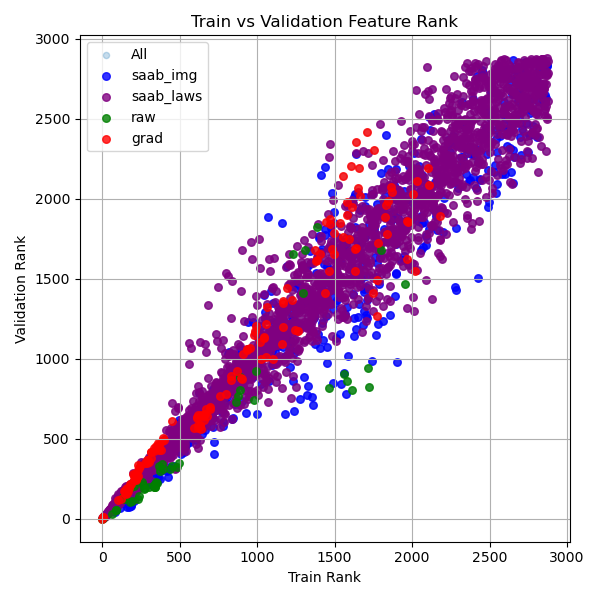

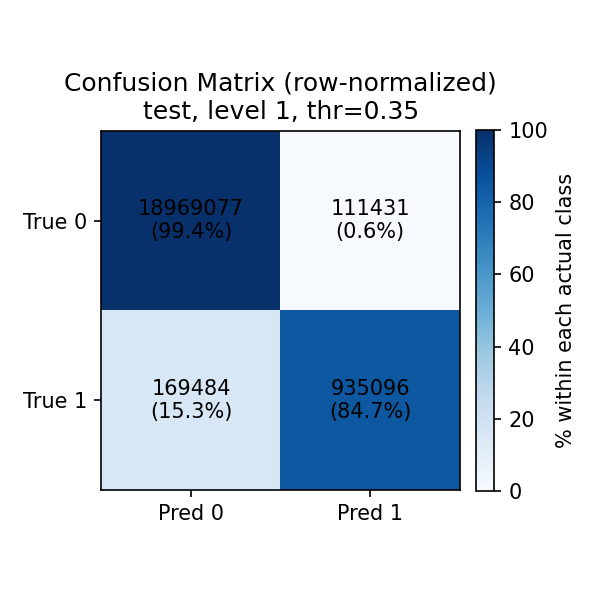

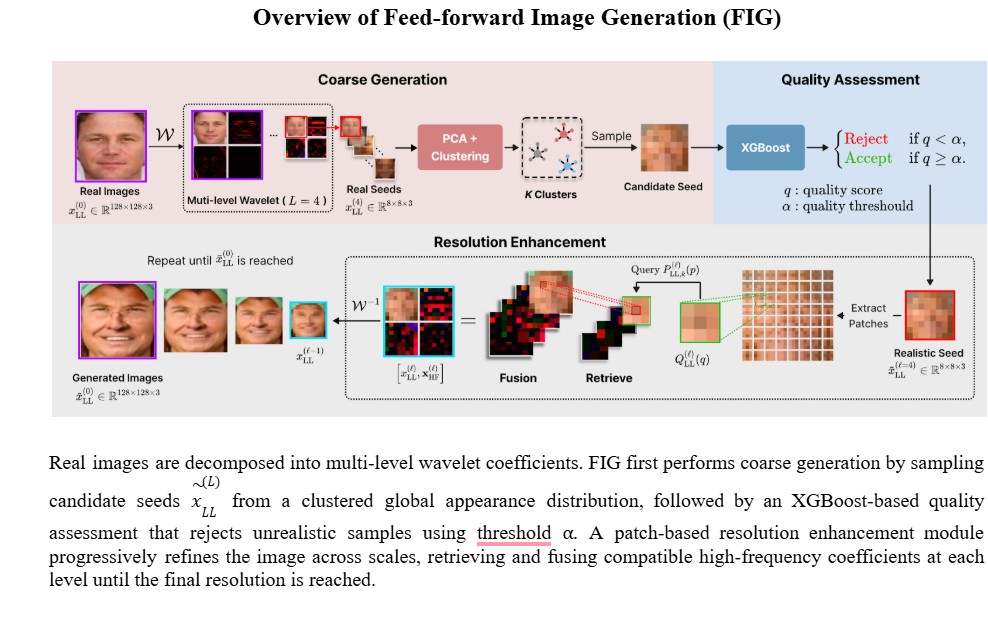

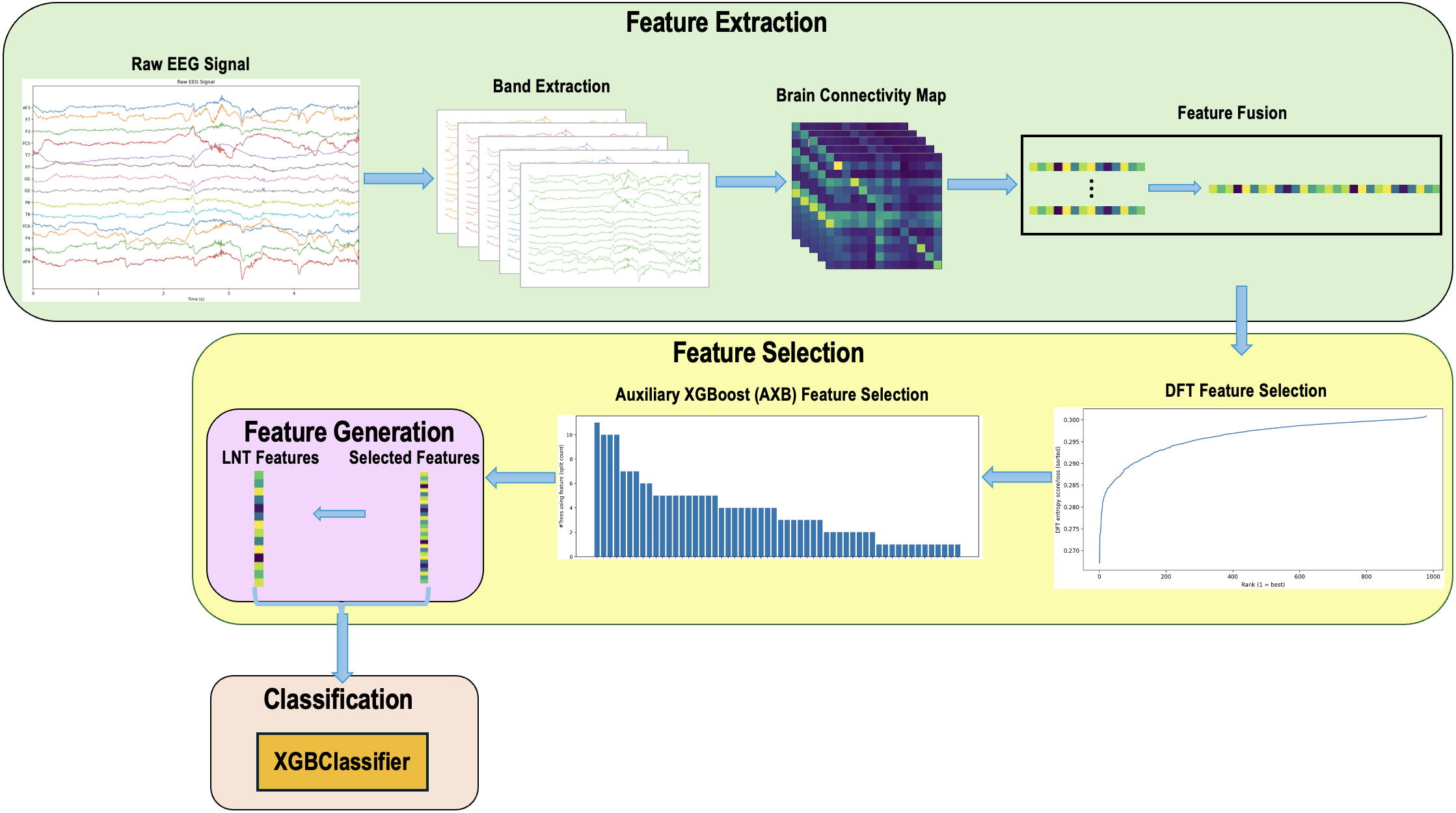

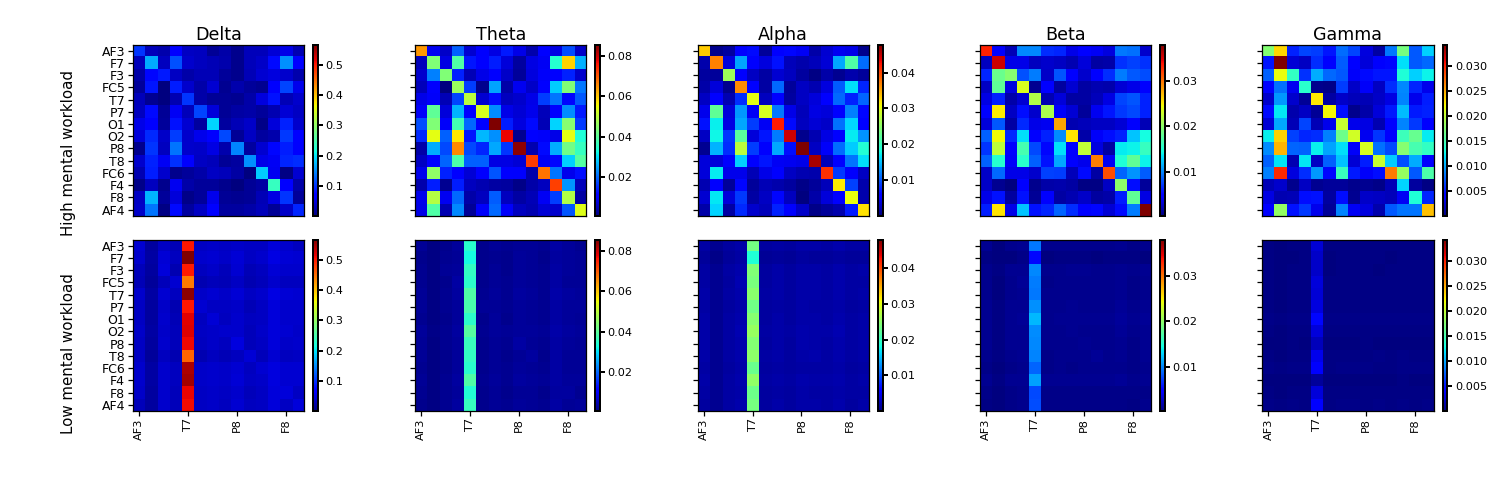

My Ph.D. journey at the University of Southern California has been both challenging and rewarding. My dissertation, titled “Interpretable and Efficient Multimodal Data Interplay: Algorithms and Applications,” focuses on developing machine learning methods that better connect and understand information across different modalities, such as text and images. Through this research, I explored ways to improve the interpretability and efficiency of multimodal systems while designing algorithms that better align with human perception and understanding. This experience strengthened my passion for artificial intelligence and its potential to create meaningful real-world impact.

I am deeply grateful to my advisor, Professor C.-C. Jay Kuo, for his continuous guidance, encouragement, and support throughout my Ph.D. journey. His mentorship has profoundly influenced both my research perspective and personal growth. I would also like to sincerely thank my labmates for their collaboration, friendship, and inspiring discussions along the way. The supportive environment in our lab made challenging moments manageable and accomplishments even more meaningful. I am excited to continue this journey by joining LinkedIn as an AI Engineer in June 2026, where I hope to contribute to the development of impactful AI systems and applications. I will always cherish the experiences, lessons, and relationships built during my Ph.D. years.