Authors: Shangwen Li and C.-C. Jay Kuo

Research Problem

Text-based information retrieval techniques have made significant progress over the last decades, giving rise to large search engine companies such as Google. Image-based retrieval, however, is still an open problem with no fully satisfactory solution. Current content-based image retrieval methods extract low-level features (shape, color, texture, etc.) and search for related images based on feature similarity. This is rather unreliable, since the same object can look very different in different scenarios. Another way to handle the problem is to first annotate each image with the key concepts it contains, and then use text-based search methods to retrieve relevant information. However, manually labeling images is extremely time-consuming. Consequently, automatic annotation becomes a promising way of solving the image retrieval problem.
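
To make the feature-similarity search concrete, the following is a minimal sketch of content-based retrieval using a single low-level feature. It assumes a coarse joint RGB color histogram as the feature and histogram intersection as the similarity measure; both choices, and the names `color_histogram`, `intersection`, and `rank_by_similarity`, are illustrative assumptions rather than a method described in this text.

```python
from typing import Dict, List, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each channel in 0..255


def color_histogram(pixels: List[Pixel], bins: int = 4) -> List[float]:
    """Normalized joint RGB histogram with `bins` levels per channel."""
    hist = [0.0] * (bins ** 3)
    step = 256 // bins
    for r, g, b in pixels:
        idx = (r // step) * bins * bins + (g // step) * bins + (b // step)
        hist[idx] += 1.0
    total = len(pixels)
    return [h / total for h in hist] if total else hist


def intersection(h1: List[float], h2: List[float]) -> float:
    """Histogram intersection: 1.0 for identical normalized histograms."""
    return sum(min(a, b) for a, b in zip(h1, h2))


def rank_by_similarity(query: List[Pixel],
                       database: Dict[str, List[Pixel]]) -> List[Tuple[str, float]]:
    """Rank database images by color similarity to the query, best first."""
    q = color_histogram(query)
    scores = [(name, intersection(q, color_histogram(px)))
              for name, px in database.items()]
    return sorted(scores, key=lambda s: s[1], reverse=True)


# Toy data (assumed): each "image" is just a short list of pixels.
database = {
    "sunset": [(250, 120, 30)] * 4,
    "forest": [(20, 130, 40)] * 4,
}
query = [(240, 110, 20)] * 3 + [(20, 130, 40)]
ranked = rank_by_similarity(query, database)
```

In this toy setup the query is ranked closest to `sunset`. The sketch also hints at the weakness noted above: the same object photographed under different lighting would fall into different histogram bins and score as dissimilar.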

Main Ideas

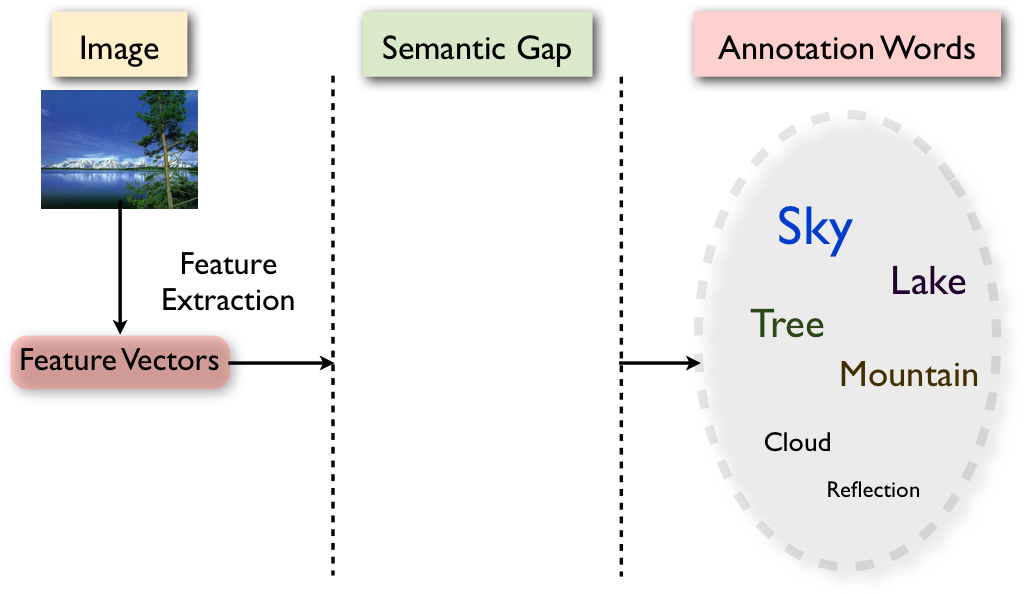

Currently, I am still searching for a good solution to the automatic image annotation problem. As shown in Fig. 1, the biggest challenge in image annotation is how to link low-level features with high-level linguistic concepts. The current literature offers no satisfactory solution: typically, the F-measure of the proposed algorithms is lower than 0.5. My approach is to first annotate the image with some metadata, such as human/non-human, indoor/outdoor, or visually salient or not. Once images have been categorized into these coarse classes, a different annotation method can be applied to each class.
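
The two-stage idea above can be sketched as a simple dispatch: determine a coarse class from the metadata, then hand the image to a class-specific annotator. The sketch below is only an illustration under stated assumptions. The metadata flags are assumed to be given already (how to obtain them is left open in this text), the per-class annotators are placeholders, and all names (`coarse_class`, `annotate`, `f_measure`) are hypothetical. The F-measure is computed in the usual way, as the harmonic mean of precision and recall over the predicted keywords.

```python
from typing import Callable, Dict, Set

# Hypothetical image record: precomputed metadata flags stand in for real
# coarse classifiers, which are not specified here.
Image = Dict[str, object]
Annotator = Callable[[Image], Set[str]]


def coarse_class(image: Image) -> str:
    """Map an image to a coarse class from its (assumed) metadata flags."""
    scene = "indoor" if image["indoor"] else "outdoor"
    subject = "human" if image["has_human"] else "non-human"
    return f"{scene}/{subject}"


def annotate(image: Image, annotators: Dict[str, Annotator]) -> Set[str]:
    """Route the image to the annotator registered for its coarse class."""
    annotator = annotators.get(coarse_class(image))
    return annotator(image) if annotator else set()


def f_measure(predicted: Set[str], truth: Set[str]) -> float:
    """Harmonic mean of precision and recall over annotation keywords."""
    hits = len(predicted & truth)
    if hits == 0:
        return 0.0
    precision = hits / len(predicted)
    recall = hits / len(truth)
    return 2 * precision * recall / (precision + recall)


# Placeholder annotators (assumed): real ones would inspect image content.
annotators: Dict[str, Annotator] = {
    "outdoor/non-human": lambda img: {"sky", "tree"},
    "indoor/human": lambda img: {"person", "room"},
}
image: Image = {"indoor": False, "has_human": False}
labels = annotate(image, annotators)
score = f_measure(labels, {"sky", "tree", "grass"})
```

Here both predicted keywords are correct (precision 1.0) but one ground-truth keyword is missed (recall 2/3), giving an F-measure of 0.8 for this toy image.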

Innovations

Currently, many image annotation algorithms use probabilistic topic models to link features and concepts [1][2][3][4]. Other methods use k-nearest-neighbor (KNN) approaches to solve the problem [5]. However, none of them tries to divide the large problem into several subproblems.
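
For readers unfamiliar with the nearest-neighbor family of methods, the following is a minimal sketch of plain, unweighted KNN label transfer. It is not the TagProp model of [5], which learns a metric and neighbor weights; the feature vectors, the Euclidean distance, and the function name `knn_annotate` are assumptions made for illustration only.

```python
import math
from collections import Counter
from typing import List, Sequence, Set, Tuple


def knn_annotate(query: Sequence[float],
                 training: List[Tuple[Sequence[float], Set[str]]],
                 k: int = 3,
                 n_labels: int = 2) -> List[str]:
    """Transfer the most frequent labels among the k nearest training images."""
    neighbors = sorted(training, key=lambda item: math.dist(query, item[0]))[:k]
    votes = Counter(label for _, labels in neighbors for label in labels)
    # Sort by descending vote count, then alphabetically so ties are deterministic.
    ranked = sorted(votes.items(), key=lambda kv: (-kv[1], kv[0]))
    return [label for label, _ in ranked[:n_labels]]


# Toy training set (assumed): 2-D feature vectors with keyword sets.
training = [
    ([0.90, 0.10], {"sky", "sea"}),
    ([0.80, 0.20], {"sky", "cloud"}),
    ([0.85, 0.15], {"sky", "sea"}),
    ([0.10, 0.90], {"tree", "grass"}),
]
predicted = knn_annotate([0.88, 0.12], training)
```

With this toy data the three nearest neighbors vote for `sky` three times and `sea` twice, so those two keywords are transferred to the query image.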

Future Challenges

There are two directions for future work.

- Find ways to define the macro attributes of an image.

- Find ways to annotate images with metadata.

References

- [1] Blei, David M., and Michael I. Jordan. "Modeling annotated data." Proceedings of the 26th annual international ACM SIGIR conference on Research and development in information retrieval. ACM, 2003.

- [2] Jeon, Jiwoon, Victor Lavrenko, and Raghavan Manmatha. "Automatic image annotation and retrieval using cross-media relevance models." Proceedings of the 26th annual international ACM SIGIR conference on Research and development in information retrieval. ACM, 2003.

- [3] Carneiro, Gustavo, et al. "Supervised learning of semantic classes for image annotation and retrieval." Pattern Analysis and Machine Intelligence, IEEE Transactions on 29.3 (2007).

- [4] Li, Jia, and James Ze Wang. "Real-time computerized annotation of pictures." Pattern Analysis and Machine Intelligence, IEEE Transactions on 30.6 (2008): 985-1002.

- [5] Guillaumin, Matthieu, et al. "Tagprop: Discriminative metric learning in nearest neighbor models for image auto-annotation." Computer Vision, 2009 IEEE 12th International Conference on. IEEE, 2009.