Welcome MCL New Member Yaqi Shao

1. Could you briefly introduce yourself and your research interests?

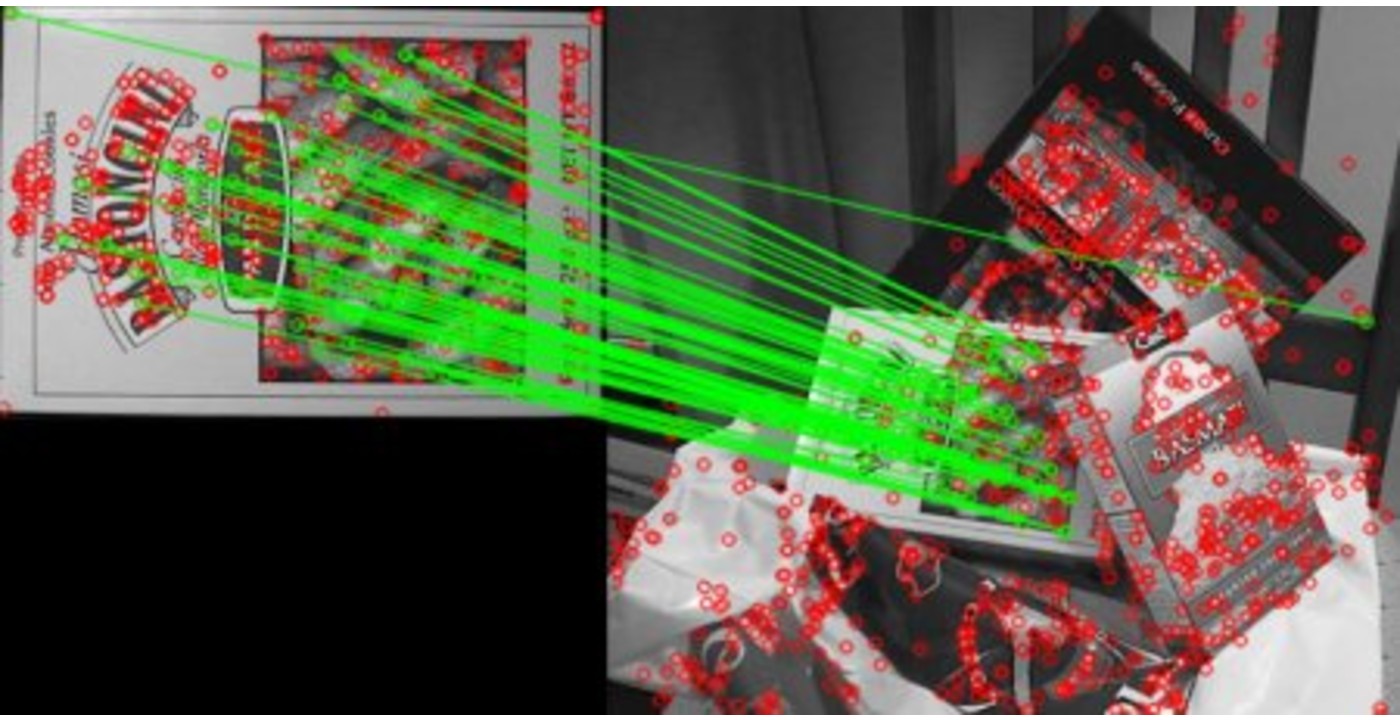

My name is Yaqi Shao. I graduated from Zhejiang Gongshang University with a B.S. degree in Computer Science and Technology, and I am now pursuing a master's degree in Computer Science at the University of Southern California. I joined the Media Communications Lab (MCL) as an intern in Spring 2020. My past research focused on image processing, such as image defogging and image enhancement. At MCL, I expect to have the opportunity to broaden my experience to include face recognition and deep learning.

2. What is your impression of MCL and USC?

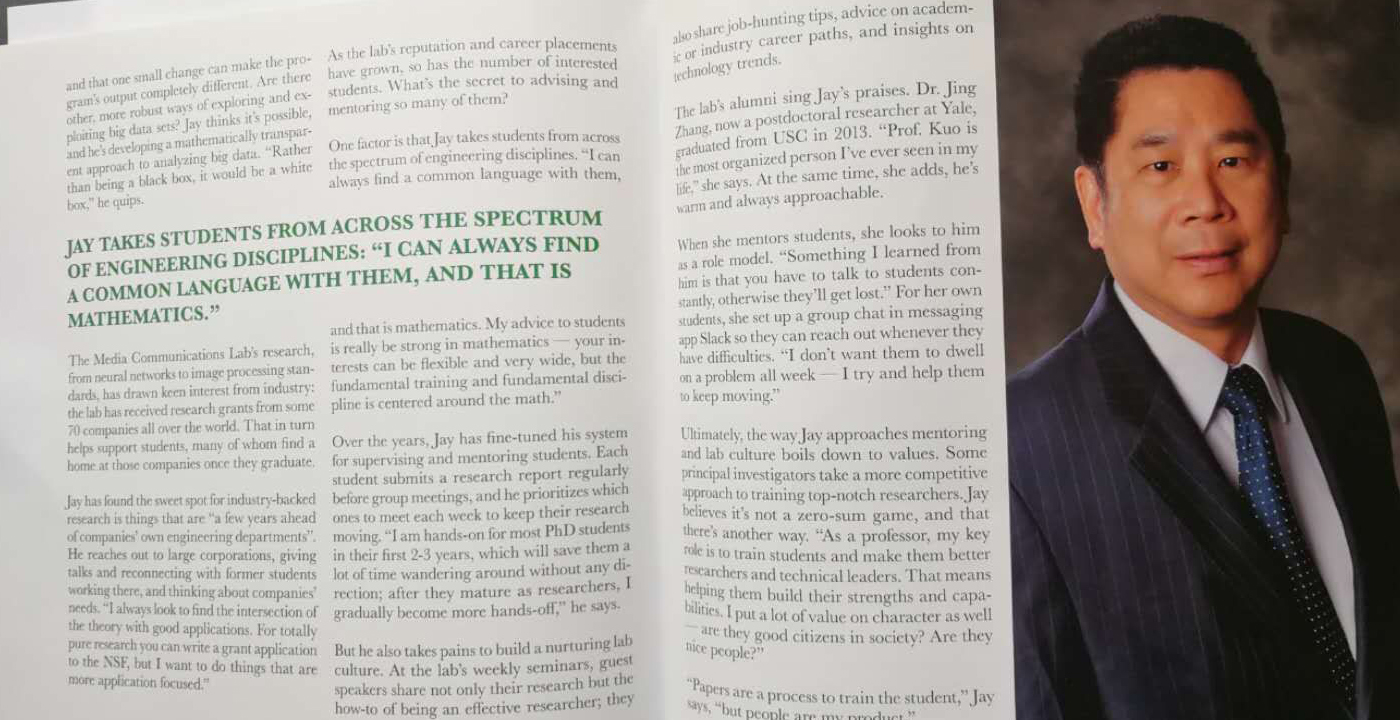

USC is an internationally renowned research university whose EE program ranks in the top 5% in the country. The Viterbi School focuses on technology and helps students become specialists in their fields. I took EE569 as an elective course under Prof. Kuo, whose hard-working and persistent spirit impressed me deeply. All the members of MCL put in continuous effort and work in groups to help each other.

3. What are your future expectations and plans at MCL?

I will continue working on face recognition and deep learning with the group this summer. As a new intern, I expect to learn a lot from Prof. Kuo and the PhD students, not only about research methods but also about work methodology. I am very interested in image processing and deep learning, and I hope to gain more experience in related research.