MCL Research on Domain Adaptation

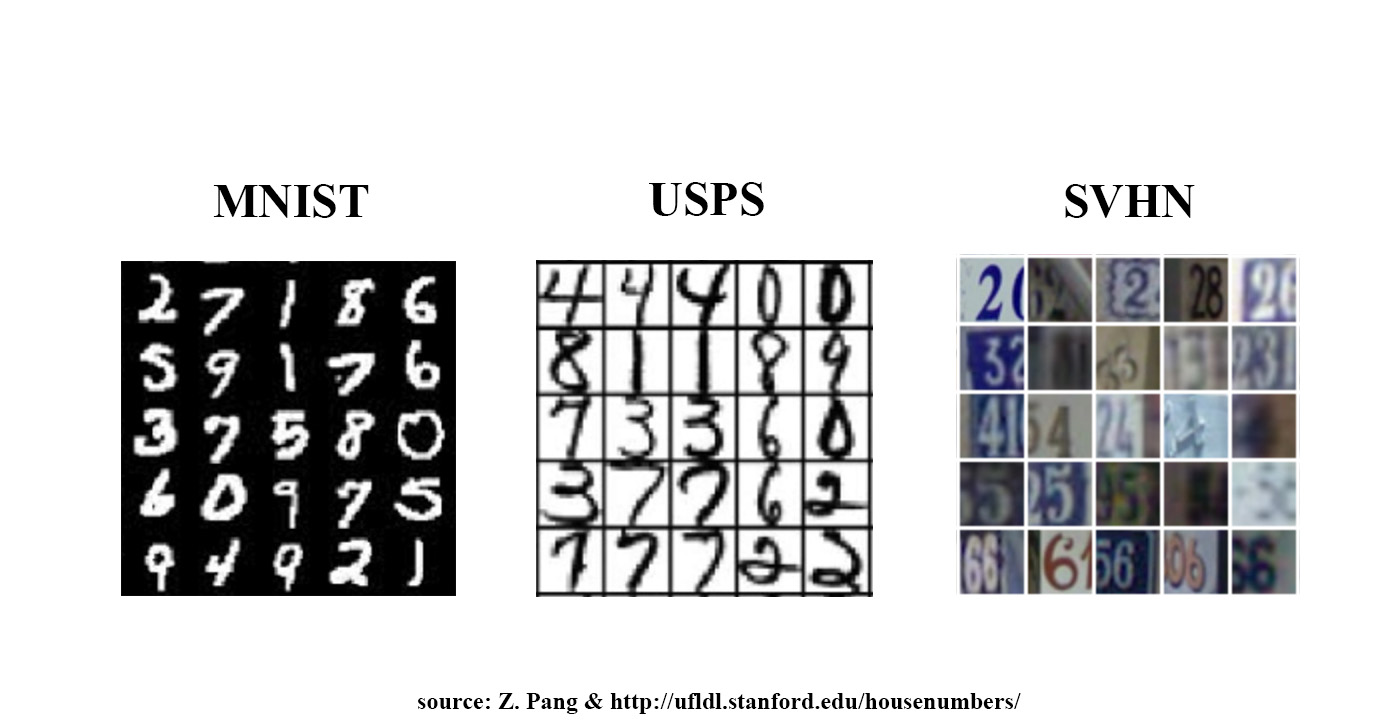

Domain adaptation is a form of transfer learning that aims to learn a model on a source data distribution and apply it to target data drawn from a different distribution. The task is generally the same in the source and target domains; for example, both are image classification or both are image segmentation. There are three types of domain adaptation, which differ in how much of the target data carries ground-truth labels. In supervised domain adaptation all target data is labeled, in semi-supervised domain adaptation only part of it is labeled, and in unsupervised domain adaptation none of it is labeled.

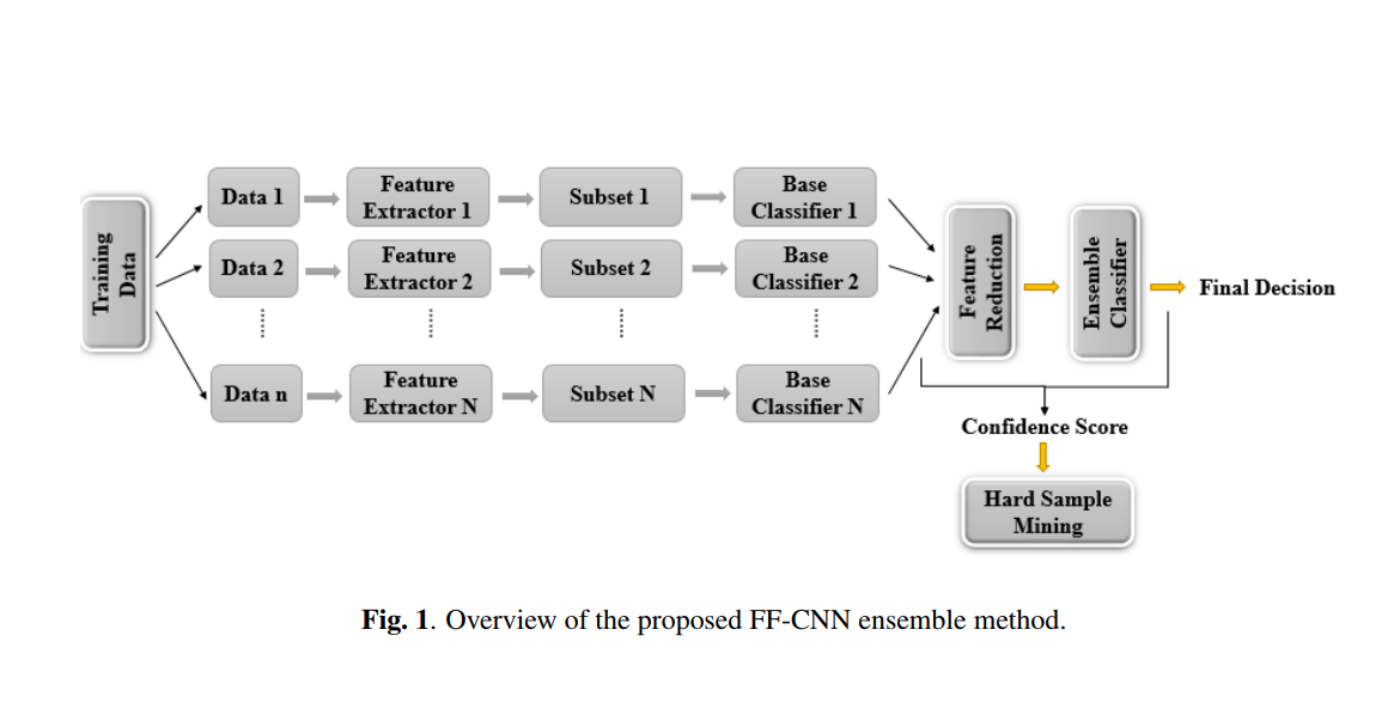

Several classical methods address the domain shift problem in unsupervised domain adaptation through feature alignment. [1] maps source and target data into a common subspace learned by reducing the distance between the two distributions, as measured by maximum mean discrepancy (MMD). [2] aligns the eigenvectors of the two domains by learning a linear mapping function. [3] uses the geometric and statistical changes between the source and target domains to build an infinite number of subspaces and integrates them. With the growing popularity of deep learning, many methods [4,5,6] apply CNNs or GANs to domain adaptation. However, these methods incur a high computational cost because of back-propagation, and GAN-based methods are unstable to train. In addition, deep-learning-based methods generalize weakly from one domain to another.
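To make the distribution distance mentioned above concrete, here is a minimal sketch of a squared maximum mean discrepancy estimate between two sets of feature vectors. It is only an illustration of the distance itself, not the subspace-learning procedure of [1]. The Gaussian (RBF) kernel, the bandwidth `gamma`, the helper names, and the synthetic Gaussian "domains" are all assumptions chosen for the example.

```python
import math
import random


def rbf(x, y, gamma):
    """Gaussian (RBF) kernel between two feature vectors (assumed kernel choice)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * sq_dist)


def mean_kernel(xs, ys, gamma):
    """Average kernel value over all pairs drawn from xs and ys."""
    total = sum(rbf(x, y, gamma) for x in xs for y in ys)
    return total / (len(xs) * len(ys))


def mmd2(source, target, gamma=0.1):
    """Biased estimate of the squared MMD between two sample sets."""
    return (mean_kernel(source, source, gamma)
            + mean_kernel(target, target, gamma)
            - 2.0 * mean_kernel(source, target, gamma))


def sample(n, dim, mean, rng):
    """Draw n synthetic feature vectors from an isotropic Gaussian (toy data)."""
    return [[rng.gauss(mean, 1.0) for _ in range(dim)] for _ in range(n)]


rng = random.Random(0)
source = sample(100, 5, 0.0, rng)          # hypothetical source-domain features
same_domain = sample(100, 5, 0.0, rng)     # target with no domain shift
shifted_domain = sample(100, 5, 2.0, rng)  # target whose mean has shifted

mmd_same = mmd2(source, same_domain)
mmd_shifted = mmd2(source, shifted_domain)
print(f"same distribution: {mmd_same:.4f}, shifted: {mmd_shifted:.4f}")
```

In this toy setting the estimate stays near zero when both sample sets come from the same distribution and grows when the target distribution is shifted. A feature-alignment method would look for a mapping under which this quantity becomes small for the real source and target data.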

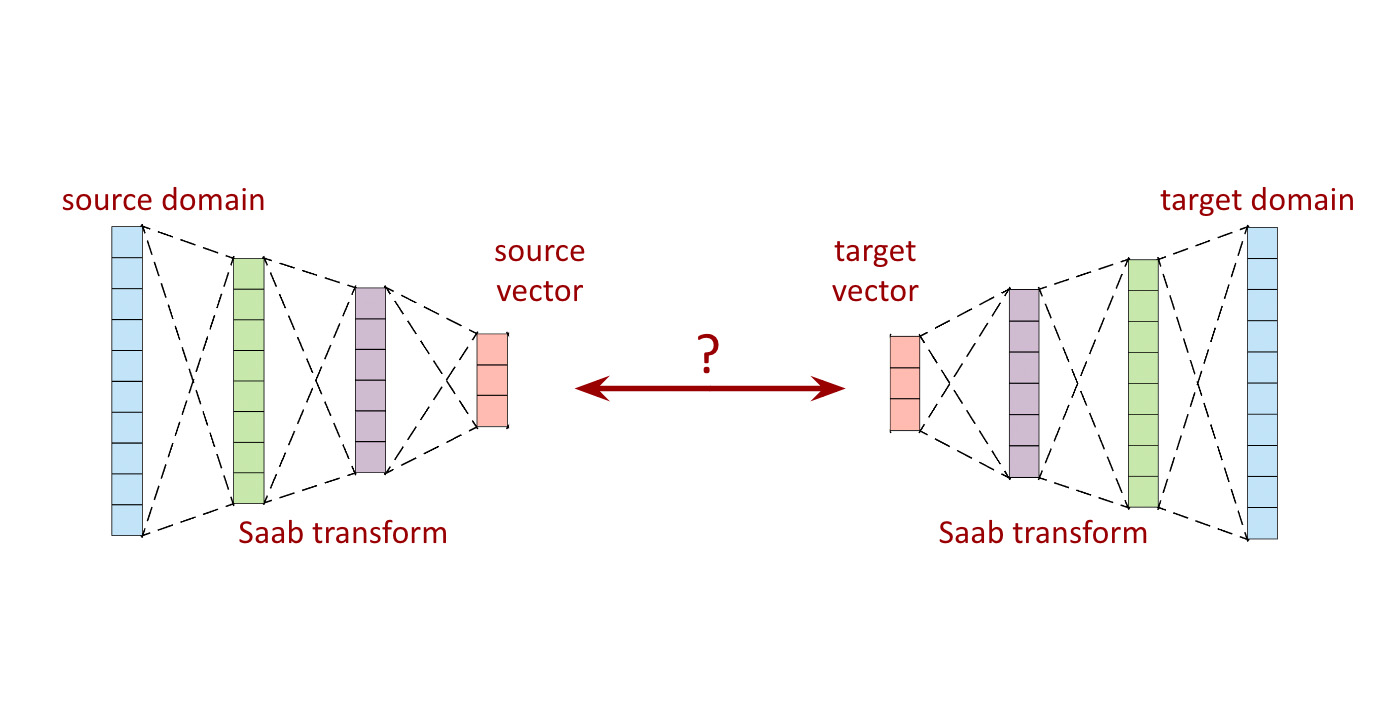

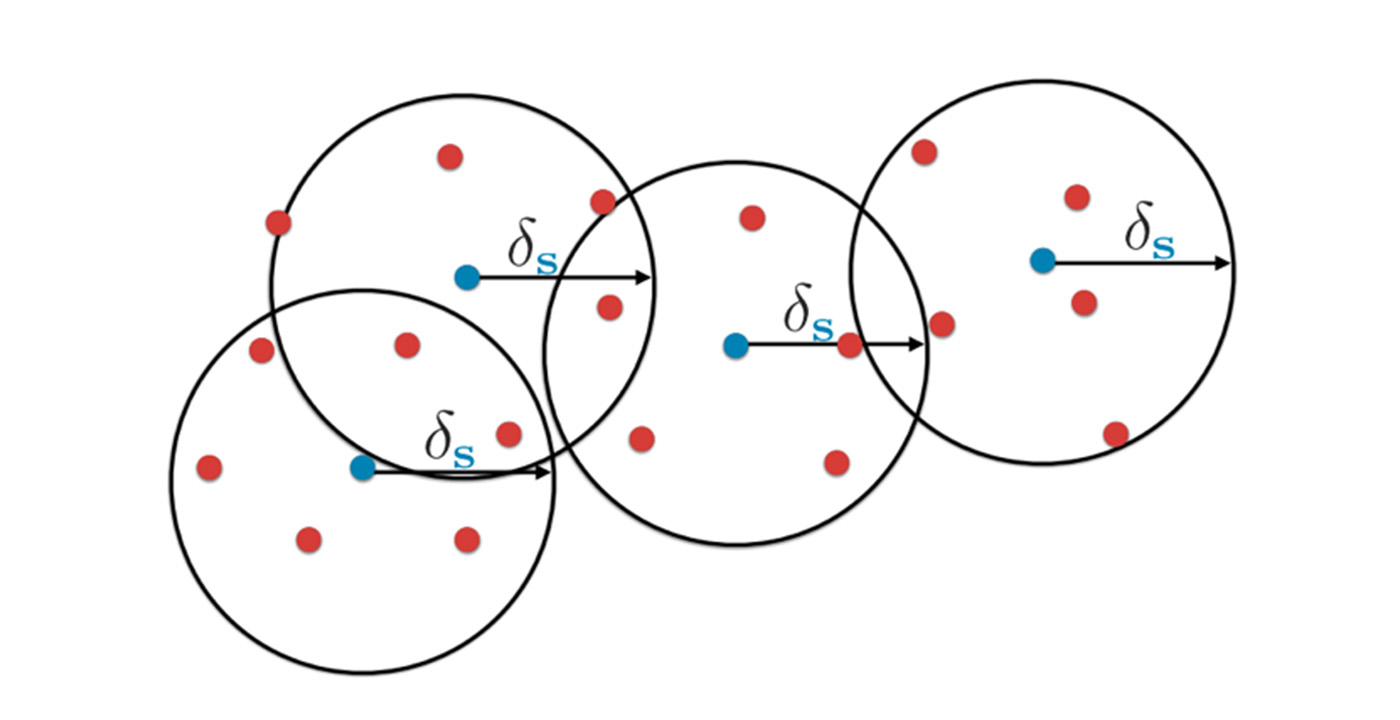

Professor Kuo has proposed several approaches to explainable deep learning since 2014. The Saak and Saab transforms provide a way to extract feature representations from images, and the original images can be reconstructed from those representations through the inverse transform. This offers a new way to handle the domain adaptation task. We are now working on aligning Saab features [...]