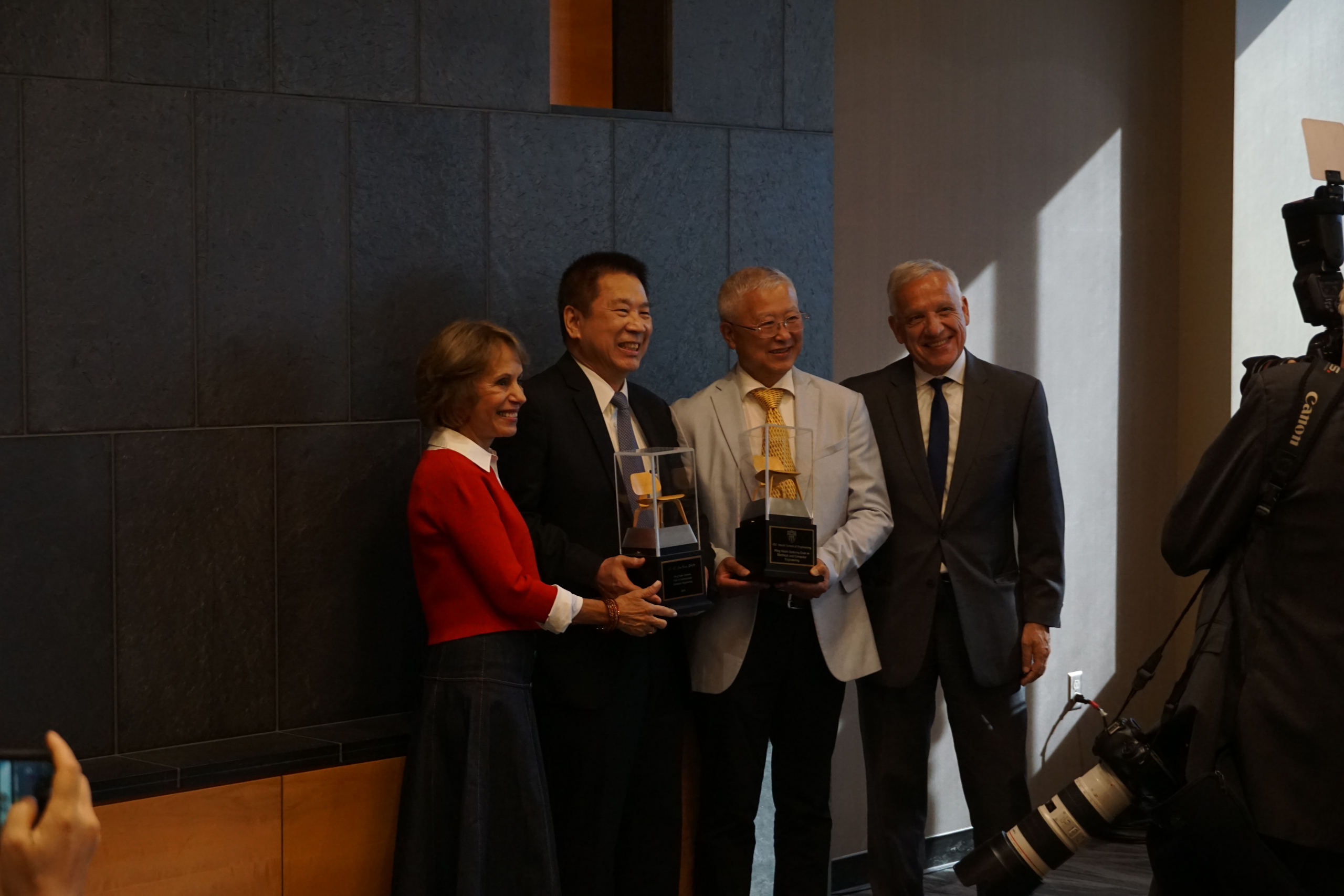

Congratulations to Professor Kuo on Receiving the NTU Distinguished Alumni Award

MCL Director Professor C.-C. Jay Kuo received the Distinguished Alumni Award from his alma mater, National Taiwan University (NTU), at its 96th Anniversary Ceremony on Friday, November 15, 2024, in Taipei, Taiwan. The university was founded in 1928 during Japanese rule as the seventh of the Imperial Universities. It comprises 11 colleges, 56 departments, 133 graduate institutes, and 60 research centers.

Professor Kuo studied as an undergraduate in the Electrical Engineering Department at NTU from 1976 to 1980. He said, “NTU provided an excellent environment for me to make good friends, explore new things, and build academic background, so I became more mature and independent. It was a memorable period of time in my life.” Professor Kuo received this honor for his contributions to multimedia technologies. He added, “I want to share this honor with all of my students and my family. Their love, trust, and efforts make this award possible. I am very grateful.”