Visual tracking has a long history and diverse applications in video surveillance, smart traffic systems, autonomous driving, and so on. Deep learning methods have gradually come to dominate online single-object tracking because of their superior accuracy. However, they usually require training on large amounts of labeled video, which is expensive and time-consuming to acquire.

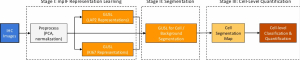

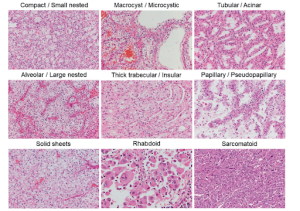

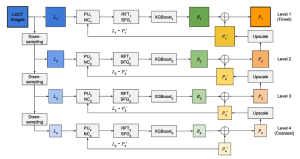

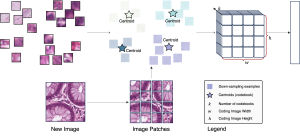

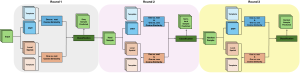

We propose an explainable, self-supervised, salient-point-based approach that tracks general objects in real time by utilizing attention and features from both the spatial and temporal domains. Our tracking system has two major parts: tracking across adjacent frames by matching salient points, which represent spatial attention, and using the temporal information stored in salient points across different frames to identify loss of the object or a change in its appearance. In both parts, salient points play an important role in capturing spatial-temporal information. The feature of a salient point is the concatenation of the features from the two hops of a two-stage channel-wise Saab transform. The first hop contains PCA information of local patches at high resolution, while the second hop works at a lower resolution with a larger receptive field. Together they naturally form a multi-resolution feature extractor that helps capture unusual patterns that deserve more attention during tracking.
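The two-hop, multi-resolution feature described above can be illustrated with a rough Python sketch. It is not our actual implementation and not a full Saab transform: it assumes plain PCA on local patches at each hop, leaves out Saab's DC/AC separation, bias term, and channel-wise processing, and uses hypothetical names (`pca_hop`, `point_feature`) with arbitrary patch sizes, strides, and component counts.

```python
import numpy as np


def extract_patches(img, size, stride):
    """Flatten every size x size patch (all channels) on a regular grid."""
    h, w, _ = img.shape
    rows = range(0, h - size + 1, stride)
    cols = range(0, w - size + 1, stride)
    patches = [img[r:r + size, c:c + size].reshape(-1) for r in rows for c in cols]
    return np.asarray(patches, dtype=float), (len(rows), len(cols))


def pca_hop(img, size, stride, n_components):
    """One simplified hop: project local patches onto their top PCA directions.

    Assumption: plain PCA stands in for the Saab transform here.
    """
    patches, (n_rows, n_cols) = extract_patches(img, size, stride)
    centered = patches - patches.mean(axis=0)
    # Rows of vt are the principal directions, ordered by explained variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    coeffs = centered @ vt[:n_components].T
    return coeffs.reshape(n_rows, n_cols, n_components)


def point_feature(hop1, hop2, row, col, stride2):
    """Concatenate the hop-1 response at (row, col) with the hop-2 response
    of a lower-resolution cell whose patch covers or lies nearest to it."""
    r2 = min(row // stride2, hop2.shape[0] - 1)
    c2 = min(col // stride2, hop2.shape[1] - 1)
    return np.concatenate([hop1[row, col], hop2[r2, c2]])


def two_hop_features(frame, stride2=2, n_components=4):
    """Hop 1 at full resolution, hop 2 on hop-1 output at lower resolution."""
    hop1 = pca_hop(frame, size=3, stride=1, n_components=n_components)
    hop2 = pca_hop(hop1, size=3, stride=stride2, n_components=n_components)
    return hop1, hop2
```

For a grayscale frame stored as an `(H, W, 1)` array, `two_hop_features(frame)` returns the two response maps, and `point_feature(hop1, hop2, row, col, stride2=2)` gives the concatenated descriptor of a candidate salient point at `(row, col)` in hop-1 coordinates. Because the second hop operates on first-hop responses with a stride, each of its cells summarizes a larger region of the original frame.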

We have obtained some preliminary results with the current framework. We evaluate our method on the long-term tracking benchmark TB-50 [1], using success plots and precision plots in one-pass evaluation (OPE) mode as metrics. This dataset includes 50 video sequences and 29,491 frames in total. The mean success rate indicates the average overlap ratio between the prediction and the ground truth, while the mean precision rate shows how close their centers are. The higher the two values, the better the tracker. Our current mean success rate is 0.4342 and our mean precision rate is 0.59, which are comparable to or even better than those of some classical conventional methods such as KCF [2]. We will continue refining the tracking system to improve these results.
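As a minimal sketch of what these two metrics measure, the Python snippet below computes the overlap ratio and the center distance for boxes given as `(x, y, w, h)`. The function names are hypothetical, and the 20-pixel precision threshold is an assumption based on common practice with this benchmark; the exact protocol behind the numbers above may differ.

```python
import numpy as np


def overlap_ratio(box_a, box_b):
    """Intersection over union of two (x, y, w, h) boxes."""
    ax, ay, aw, ah = box_a
    bx, by, bw, bh = box_b
    inter_w = max(0.0, min(ax + aw, bx + bw) - max(ax, bx))
    inter_h = max(0.0, min(ay + ah, by + bh) - max(ay, by))
    inter = inter_w * inter_h
    union = aw * ah + bw * bh - inter
    return inter / union if union > 0 else 0.0


def center_error(box_a, box_b):
    """Euclidean distance in pixels between the two box centers."""
    ax, ay, aw, ah = box_a
    bx, by, bw, bh = box_b
    return float(np.hypot((ax + aw / 2) - (bx + bw / 2),
                          (ay + ah / 2) - (by + bh / 2)))


def evaluate(predictions, ground_truths, dist_threshold=20.0):
    """Return (mean overlap ratio, fraction of frames within dist_threshold).

    Assumption: dist_threshold=20 pixels is a conventional choice, not a
    value stated in this post.
    """
    overlaps = np.array([overlap_ratio(p, g)
                         for p, g in zip(predictions, ground_truths)])
    errors = np.array([center_error(p, g)
                       for p, g in zip(predictions, ground_truths)])
    return float(overlaps.mean()), float((errors <= dist_threshold).mean())
```

Calling `evaluate(predictions, ground_truths)` on per-frame boxes for one sequence gives one pair of values; averaging over sequences is one way to summarize a whole benchmark.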

References

[1] Wu, Yi, Jongwoo Lim, and Ming-Hsuan Yang. “Online object tracking: A benchmark.” Proceedings of the IEEE conference on computer vision and pattern recognition. 2013.

[2] Henriques, João F., et al. “High-speed tracking with kernelized correlation filters.” IEEE transactions on pattern analysis and machine intelligence 37.3 (2014): 583-596.

By Zhiruo Zhou