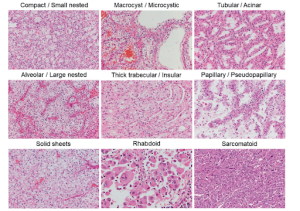

The objective of image-to-image (I2I) translation involves learning a mapping from a source domain to

a target domain. Specifically, it aims at transforming images of the source style to those of the target

style with content consistency. While there is a domain gap, it can be mitigated by aligning the

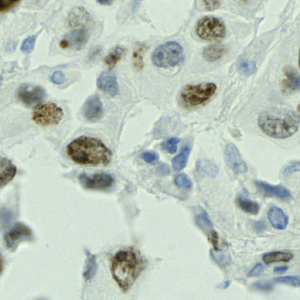

distributions of the source and the target domains. Nevertheless, disparities between class distributions

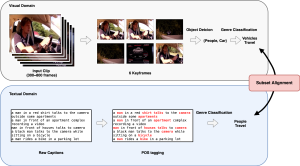

of the source and target domains result in semantic distortion (see Figure 1); namely, different

semantics of correspondent regions between input and output. The semantic distortion could potentially

impact the efficacy of downstream tasks, such as semantic segmentation or object classification.

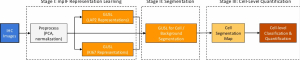

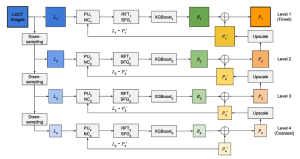

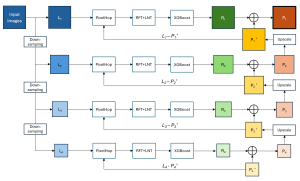

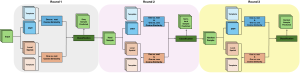

In this work, we propose a novel contrastive learning-based method that alleviates semantic

distortion by ensuring semantic consistency between input and output images. This is achieved by

enhancing the inter-dependence of structure and texture features between input and output by

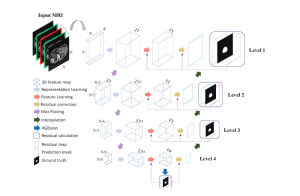

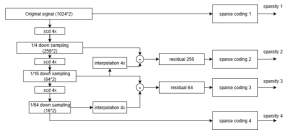

maximizing their mutual information. In addition, we exploit multiscale predictions to boost the

I2I translation performance by employing global context and local detail information jointly to

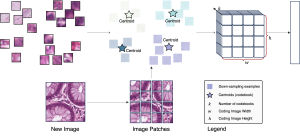

predict translated images of superior quality, especially for high-resolution images. Hard negative

sampling is also applied to reduce semantic distortion by sampling informative negative samples.

For brevity, we refer to our method as SemST. Experiments conducted on I2I translation across

various datasets demonstrate the state-of-the-art performance of the SemST method. Additionally,

utilizing refined synthetic images in different UDA tasks confirms its potential for enhancing the

performance of UDA.
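To make the contrastive idea more concrete, here is a minimal NumPy sketch of a patch-wise InfoNCE loss, a common objective for maximizing a lower bound on the mutual information between paired features. It is not the SemST implementation. The function name `patch_info_nce`, the temperature `tau`, and the top-k rule used for hard negatives (`num_hard`) are all assumptions made for illustration; the hard negative sampling used in SemST may differ.

```python
import numpy as np


def l2_normalize(x, axis=-1, eps=1e-8):
    """Scale each feature vector to unit length."""
    return x / (np.linalg.norm(x, axis=axis, keepdims=True) + eps)


def patch_info_nce(out_feats, in_feats, tau=0.07, num_hard=None):
    """Illustrative patch-wise InfoNCE loss (hypothetical helper, not SemST code).

    out_feats, in_feats: (N, D) arrays. Row i of each holds the feature of the
    same patch location in the output and input image, so (i, i) is the
    positive pair and (i, j != i) are negatives.
    num_hard: if given (must be <= N - 1), keep only the num_hard negatives most
    similar to each query, a simple stand-in for hard negative sampling.
    """
    q = l2_normalize(out_feats)
    k = l2_normalize(in_feats)
    sim = q @ k.T / tau                      # (N, N) scaled cosine similarities
    n = sim.shape[0]
    pos = np.diag(sim)                       # similarity of each positive pair

    neg = sim.copy()
    neg[np.arange(n), np.arange(n)] = -np.inf  # mask out the positives
    if num_hard is not None:
        # Sort negatives by descending similarity and keep the hardest ones.
        neg = -np.sort(-neg, axis=1)[:, :num_hard]

    logits = np.concatenate([pos[:, None], neg], axis=1)
    # Numerically stable log-sum-exp over the positive and its negatives.
    m = logits.max(axis=1, keepdims=True)
    lse = m[:, 0] + np.log(np.exp(logits - m).sum(axis=1))
    # Cross-entropy with the positive as the correct class, averaged over patches.
    return float(np.mean(lse - pos))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    feats = rng.normal(size=(16, 32))
    noisy = feats + 0.01 * rng.normal(size=feats.shape)
    print("aligned patches :", patch_info_nce(noisy, feats))
    print("mismatched      :", patch_info_nce(feats[::-1].copy(), feats))
    print("4 hard negatives:", patch_info_nce(noisy, feats, num_hard=4))
```

The loss is small when each output patch is most similar to the input patch at the same location and large when patches are mismatched, which is the behavior a semantic consistency term needs. Restricting the negatives to the most similar ones focuses the loss on the confusable patches, which is the general motivation for hard negative sampling.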

MCL Research on Enhanced Image-to-Image Translation


About the Author: Mahtab Movahhedrad

Mahtab Movahhedrad received her B.S. and M.S. degrees in Electrical Engineering from the University of Tabriz and Tehran Polytechnic, Iran, respectively. She is currently a Ph.D. student in the Department of Electrical Engineering, University of Southern California, advised by Professor Kuo. She joined the Media Communications Lab in Fall 2021. Her research interests include image processing, computer vision, and machine learning.