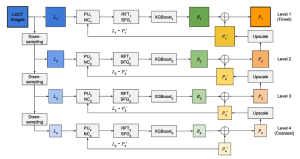

Knowledge graph (KG) embedding has been intensively studied in recent years. Among distance-based KG embedding models, TransE [2], RotatE, and PairRE are representative and effective; they use translation, rotation, and scaling, respectively, to represent relations between entities. Despite relying on such simple geometric transformations, these models achieve relatively good performance in link prediction. However, it is unclear how to combine the strengths of these individual operations to produce a better embedding model. We can treat each operation as a basic building block and compose the blocks into more effective KG embedding scoring functions. The composition of geometric operators is a well-established tool for image manipulation, so it is natural to apply the same strategy to combine translation, rotation, and scaling operators in 3D space. Our previous work, CompoundE [1], demonstrated that composing affine operations can be an effective strategy for designing KG embeddings. Here we ask whether cascading 3D operators can achieve even better results.
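To make the building-block idea concrete, here is a minimal sketch of a distance-based score in which a relation acts on a 3D head vector by scaling, then rotating, then translating. It is an illustration under stated assumptions rather than the exact CompoundE formulation: the function names, the choice of rotating about the z-axis only, and the operator order are all assumptions made for this example, and a real model would apply such operators blockwise to high-dimensional embeddings.

```python
import math

def scale(v, s):
    # Element-wise scaling (the PairRE-style building block).
    return [vi * si for vi, si in zip(v, s)]

def rotate_z(v, theta):
    # Rotation about the z-axis by angle theta (assumed axis, for illustration).
    x, y, z = v
    c, s = math.cos(theta), math.sin(theta)
    return [c * x - s * y, s * x + c * y, z]

def translate(v, t):
    # Translation (the TransE-style building block).
    return [vi + ti for vi, ti in zip(v, t)]

def compound_score(head, tail, s, theta, t):
    # Hypothetical compound score: negative distance between the
    # transformed head (scale -> rotate -> translate) and the tail.
    transformed = translate(rotate_z(scale(head, s), theta), t)
    return -math.dist(transformed, tail)

# Toy relation parameters (assumed values).
rel_scale, rel_angle, rel_shift = [2.0, 2.0, 2.0], math.pi / 2, [0.0, 0.0, 1.0]
head = [1.0, 0.0, 0.0]

score_match = compound_score(head, [0.0, 2.0, 1.0], rel_scale, rel_angle, rel_shift)
score_other = compound_score(head, [3.0, 0.0, 0.0], rel_scale, rel_angle, rel_shift)
print(score_match > score_other)
```

A higher (less negative) score means the transformed head lands closer to the candidate tail, so the first tail is the more plausible completion under these toy parameters.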

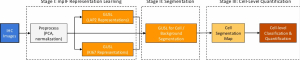

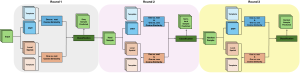

We propose to extend our previous work by including affine operations beyond translation, rotation, and scaling in our framework, and by moving from 2D transformations to 3D transformations. In addition, we propose to enhance CompoundE by addressing two critical issues. First, although compounding operations yields many model variants, it is still unclear how to systematically search for the scoring function that performs best on an individual dataset. We propose to use beam search to gradually build more complex scoring functions from simple but effective variants. To speed up model tuning during the search, we initialize with the pre-trained entity embeddings from base models. Second, although ensemble learning is a popular strategy, it remains under-explored for learning KG embeddings and performing KG completion tasks. To boost link prediction performance, we propose to leverage unsupervised rank aggregation functions to unify the rank predictions from individual model variants. Rank aggregation helps to boost the ranking of the ground truth and reduce the impact of outliers.
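As a rough illustration of the rank aggregation step, the sketch below combines the rankings that several model variants assign to the same candidate entities. The function name `aggregate_ranks`, the reducers offered (mean, median, min), the tie-breaking rule, and the toy data are all assumptions for this example; the post does not specify which aggregation functions are used.

```python
from statistics import mean, median

def aggregate_ranks(rank_lists, how="median"):
    """Combine per-model ranks (1 = best) into one consensus ranking.

    rank_lists: list of dicts, one per model variant, each mapping
    candidate -> rank. All dicts are assumed to share the same keys.
    """
    reducer = {"mean": mean, "median": median, "min": min}[how]
    candidates = rank_lists[0].keys()
    combined = {c: reducer([ranks[c] for ranks in rank_lists]) for c in candidates}
    # Sort by aggregated value; break ties by candidate name for determinism.
    ordered = sorted(combined, key=lambda c: (combined[c], c))
    return {c: position + 1 for position, c in enumerate(ordered)}

# Three hypothetical model variants ranking four candidate tail entities.
# The third variant is an outlier for the ground truth "paris".
variant_ranks = [
    {"paris": 1, "lyon": 2, "nice": 3, "lille": 4},
    {"paris": 1, "lyon": 3, "nice": 2, "lille": 4},
    {"paris": 4, "lyon": 1, "nice": 2, "lille": 3},
]

consensus = aggregate_ranks(variant_ranks, how="median")
print(consensus)
```

With the median reducer, the single poor rank from the third variant does not pull "paris" out of first place, which is the kind of outlier damping the paragraph above describes.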

– Xiou Ge

References:

[1] X. Ge, Y. C. Wang, B. Wang, C.-C. J. Kuo, “CompoundE: Knowledge Graph Embedding with Translation, Rotation and Scaling Compound Operations.” Available: https://arxiv.org/abs/2207.05324

[2] A. Bordes, N. Usunier, A. Garcia-Duran, J. Weston, O. Yakhnenko, “Translating Embeddings for Modeling Multi-relational Data.” Advances in Neural Information Processing Systems, vol. 26, 2013. Available: https://proceedings.neurips.cc/paper/2013/file/1cecc7a77928ca8133fa24680a88d2f9-Paper.pdf