Video object tracking is a fundamental computer vision problem with applications in video surveillance, autonomous navigation, and robotic vision. In online single object tracking (SOT), a tracker is given a bounding box around the target object in the first frame and must predict the object's box location and size in all remaining frames. Tracker performance is measured by accuracy (a higher success rate), robustness (automatic recovery from tracking loss), and computational complexity and speed (a higher number of frames per second, or FPS).
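To make the accuracy measure concrete, here is a minimal sketch of a success-rate computation. It assumes the common benchmark convention that a frame counts as a success when the intersection-over-union (IoU) between the predicted and ground-truth boxes exceeds a threshold. The `(x, y, width, height)` box layout, the function names, and the 0.5 threshold are illustrative assumptions, not details taken from UHP-SOT++.

```python
from typing import Sequence, Tuple

# Assumed box layout for this sketch: (x, y, width, height), top-left origin.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ax, ay, aw, ah = a
    bx, by, bw, bh = b
    # Width and height of the overlap region, clamped at zero when disjoint.
    inter_w = max(0.0, min(ax + aw, bx + bw) - max(ax, bx))
    inter_h = max(0.0, min(ay + ah, by + bh) - max(ay, by))
    inter = inter_w * inter_h
    union = aw * ah + bw * bh - inter
    return inter / union if union > 0 else 0.0


def success_rate(pred: Sequence[Box], gt: Sequence[Box],
                 threshold: float = 0.5) -> float:
    """Fraction of frames whose IoU exceeds `threshold` (assumed value)."""
    if len(pred) != len(gt):
        raise ValueError("pred and gt must cover the same frames")
    if not pred:
        return 0.0
    hits = sum(iou(p, g) > threshold for p, g in zip(pred, gt))
    return hits / len(pred)
```

Benchmarks often sweep the threshold from 0 to 1 and report the area under the resulting success curve; the single-threshold version above is the simplest instance of the idea.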

Online trackers can be categorized into supervised and unsupervised ones. Supervised trackers based on deep learning (DL) have dominated the SOT field in recent years. DL trackers offer state-of-the-art tracking accuracy, but they have some limitations. First, training requires a large number of annotated tracking video clips, and annotation is laborious and costly. Second, their large model sizes demand substantial memory to store the network parameters. Third, their high computational requirements hinder deployment on resource-limited devices such as drones or mobile phones. Advanced unsupervised SOT methods often use discriminative correlation filters (DCFs), which run fast on a CPU using the fast Fourier transform (FFT) and have very small model sizes. However, there is a significant performance gap between unsupervised DCF trackers and supervised DL trackers. The gap is attributed to limitations of DCF trackers such as failure to recover from tracking loss and inflexibility in object box adaptation.
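The following sketch illustrates why DCFs are cheap: training and detection reduce to element-wise operations in the Fourier domain. It is a generic single-channel, MOSSE-style filter written with NumPy, not STRCF or UHP-SOT++. The function names, the Gaussian target response, and the regularization value `lam` are assumptions made for illustration.

```python
import numpy as np


def gaussian_response(shape, sigma=2.0):
    """Desired filter output: a Gaussian peak at the patch center."""
    h, w = shape
    ys, xs = np.mgrid[0:h, 0:w]
    cy, cx = h // 2, w // 2
    return np.exp(-((ys - cy) ** 2 + (xs - cx) ** 2) / (2.0 * sigma ** 2))


def train_filter(patch, target, lam=1e-2):
    """Closed-form ridge-regression filter in the Fourier domain.

    Returns the conjugate filter H* = (G . conj(F)) / (F . conj(F) + lam),
    where F and G are the 2-D FFTs of the patch and the desired response.
    """
    F = np.fft.fft2(patch)
    G = np.fft.fft2(target)
    return (G * np.conj(F)) / (F * np.conj(F) + lam)


def detect(filter_conj, patch):
    """Correlate a new patch with the filter; return the peak (row, col)."""
    response = np.real(np.fft.ifft2(filter_conj * np.fft.fft2(patch)))
    peak = np.unravel_index(np.argmax(response), response.shape)
    return tuple(int(i) for i in peak)


# Usage: train on one patch, then locate a circularly shifted copy of it.
rng = np.random.default_rng(0)
patch = rng.standard_normal((32, 32))
filt = train_filter(patch, gaussian_response(patch.shape))
shifted = np.roll(patch, shift=(3, 5), axis=(0, 1))
peak_row, peak_col = detect(filt, shifted)
# The peak's offset from the patch center estimates the target displacement.
dy, dx = peak_row - 32 // 2, peak_col - 32 // 2
```

The whole model is one complex array the size of the search patch, which is where the small memory footprint comes from. Practical DCF trackers add multi-channel features, cosine windowing, and online filter updates, all of which this sketch omits.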

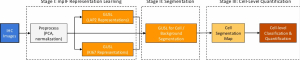

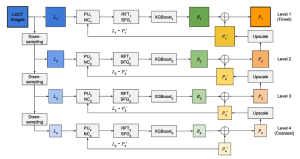

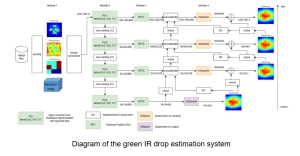

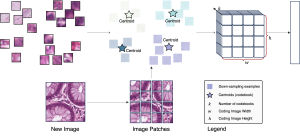

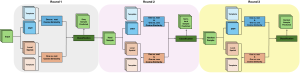

To address the above issues with a green solution, we previously proposed UHP-SOT (Unsupervised High-Performance Single Object Tracker), which uses STRCF as the baseline and incorporates two new modules: background motion modeling and trajectory-based object box prediction. Our new work, UHP-SOT++, extends UHP-SOT with a more systematic and better-justified fusion strategy and more extensive experimental results on both small- and large-scale datasets. It is more robust and accurate, ranks at the top among all unsupervised methods, and operates at 20 FPS on an i5 CPU even without code optimization. This makes it well suited to real-time object tracking on resource-limited platforms.

–Zhiruo Zhou