We are pleased to share that two papers from our group, by Qin Huang and Siyang Li, have been accepted to BMVC 2017. According to the conference committee, BMVC is more competitive this year than before. We are happy to have two papers accepted, one of them as an oral presentation.

–Qin Huang, Chunyang Xia, Chihao Wu, Siyang Li, Ye Wang, Yuhang Song and C.-C. Jay Kuo, “Semantic Segmentation with Reverse Attention” (Oral)

–Siyang Li, Xiangxin Zhu, Qin Huang, Hao Xu and C.-C. Jay Kuo, “Multiple Instance Curriculum Learning for Weakly Supervised Object Detection” (Poster)

Here are the abstracts of the two papers:

“Semantic Segmentation with Reverse Attention”:

Abstract: Recent development in fully convolutional neural network enables efficient end-to-end learning of semantic segmentation. Traditionally, the convolutional classifiers are taught to learn the representative semantic features of labeled semantic objects. In this work, we propose a reverse attention network (RAN) architecture that trains the network to capture the opposite concept (i.e., what are not associated with a target class) as well. The RAN is a three-branch network that performs the direct, reverse and reverse-attention learning processes simultaneously. Extensive experiments are conducted to show the effectiveness of the RAN in semantic segmentation. Being built upon the DeepLabv2-LargeFOV, the RAN achieves the state-of-the-art mean IoU score (48.1%) for the challenging PASCAL-Context dataset. Significant performance improvements are also observed for the PASCAL-VOC, Person-Part, NYUDv2 and ADE20K datasets.

“Multiple Instance Curriculum Learning for Weakly Supervised Object Detection”:

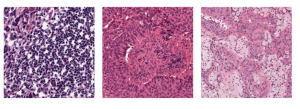

Abstract: When supervising an object detector with weakly labeled data, most existing approaches are prone to trapping in the discriminative object parts, e.g., finding the face of a cat instead of the full body, due to lacking the supervision on the extent of full objects. To address this challenge, we incorporate object segmentation into the detector training, which guides the model to correctly localize the full objects. We propose the multiple instance curriculum learning (MICL) method, which injects curriculum learning (CL) into the multiple instance learning (MIL) framework. The MICL method starts by automatically picking the easy training examples, where the extent of the segmentation masks agree with detection bounding boxes. The training set is gradually expanded to include harder examples to train strong detectors that handle complex images. The proposed MICL method with segmentation in the loop outperforms the state-of-the-art weakly supervised object detectors by a substantial margin on the PASCAL VOC datasets.

Congratulations again on the two papers accepted by BMVC 2017.