Dr. Rouhsedaghat, an MCL alumna who graduated last summer, recently presented work [1] on image synthesis related to her PhD thesis at AAAI-23. Here is the presentation summary from Dr. Rouhsedaghat:

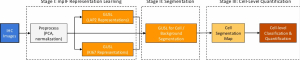

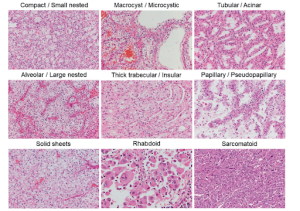

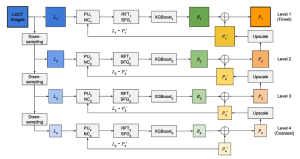

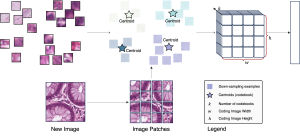

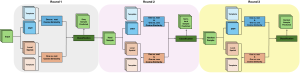

We offer a method for one-shot mask-guided image synthesis that allows controlled manipulation of a single image by inverting a quasi-robust classifier equipped with strong regularizers. Our proposed method, entitled MAGIC, leverages structured gradients from a pre-trained quasi-robust classifier to better preserve the input semantics while preserving its classification accuracy, thereby guaranteeing credibility in the synthesis. Unlike current methods that use complex primitives to supervise the process or use attention maps as a weak supervisory signal, MAGIC aggregates gradients over the input, driven by a binary guide mask that enforces a strong spatial prior. MAGIC implements a series of manipulations within a single framework, achieving shape and location control, intense non-rigid shape deformations, and copy/move operations in the presence of repeating objects, and it gives users firm control over the synthesis by requiring only that they specify binary guide masks. Our study and findings are supported by various qualitative comparisons with the state of the art on the same images sampled from ImageNet, and by quantitative analysis using machine perception along with a user survey of 100+ participants that endorses our synthesis quality.
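To make the idea of mask-driven input gradients concrete, here is a minimal toy sketch in NumPy. It is not the MAGIC implementation: a small random linear softmax classifier stands in for the pre-trained quasi-robust classifier, no regularizers are used, and all names (`masked_input_gradient_step`, `W`, `b`, `mask`, the learning rate) are hypothetical choices made for illustration. It only shows how a binary guide mask can confine classifier-gradient updates to a chosen region of the input.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over a 1-D logit vector."""
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()

def masked_input_gradient_step(x, mask, W, b, target, lr=0.02):
    """One gradient-descent step on the cross-entropy loss with respect
    to the input x, with the gradient zeroed outside the binary mask.

    Assumption: the classifier is a toy linear softmax model
    (logits = W @ x.ravel() + b), used here only as a stand-in.
    """
    p = softmax(W @ x.ravel() + b)
    onehot = np.zeros_like(p)
    onehot[target] = 1.0
    # Analytic d(cross-entropy)/dx for a linear softmax classifier.
    grad = (W.T @ (p - onehot)).reshape(x.shape)
    # The binary mask acts as a hard spatial prior on where x may change.
    return x - lr * mask * grad

rng = np.random.default_rng(0)
height, width, num_classes = 8, 8, 3
W = rng.normal(size=(num_classes, height * width))  # toy classifier weights
b = np.zeros(num_classes)

x0 = rng.normal(size=(height, width))   # toy single-channel "image"
mask = np.zeros((height, width))
mask[2:6, 2:6] = 1.0                    # user-specified binary guide mask

x = x0.copy()
for _ in range(100):
    x = masked_input_gradient_step(x, mask, W, b, target=1)

p_before = softmax(W @ x0.ravel() + b)[1]
p_after = softmax(W @ x.ravel() + b)[1]
print("target probability before:", p_before)
print("target probability after: ", p_after)
```

In this sketch, pixels outside the mask are left untouched while pixels inside it move toward the target class, which is the basic intuition behind using a mask as a spatial prior during classifier inversion.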

[1] Rouhsedaghat, Mozhdeh, et al. “MAGIC: Mask-Guided Image Synthesis by Inverting a Quasi-Robust Classifier.” arXiv preprint arXiv:2209.11549 (2022).